The influence of disinformation in Georgia and AI tactics to counter it

A Georgia Excursion Report

By Alexi Mikluha, Kinga Petho and Quinty Sami

This is the first publication of the Georgia series. From 23 February to 3 March 2025, eight SSMS students went on a research excursion to Tbilisi, Georgia, to conduct field research on a chosen topic.

Introduction

The contemporary world faces a multitude of challenges, and one of the most notable is information warfare. In particular, the spread of artificial intelligence (AI) is making disinformation increasingly difficult to counter. As technology evolves, it is crucial for nation-states to be capable of mitigating such threats. First, we must distinguish between propaganda, misinformation, and disinformation. Propaganda can be defined as the favorable interpretation of information by and for governments, media, and corporations, which can mislead and influence public opinion (Urushadze & Kiknadze, 2021, p. 71). Misinformation can be operationalized as the unintentional spread of false information (Chadwick & Stanyer, 2021). By contrast, disinformation refers to the intentional creation and dissemination of false and/or deceptive information (Hameleers, 2022). This report examines the influence of disinformation in Georgia and how AI can help counter it. It covers, in turn, the historical context of Georgia’s 2008 war, the evolution of the Georgian Dream, public perception of disinformation, our survey results, and finally the role of AI in battling disinformation.

Methodology

This paper consists of data collected through various means, such as visits to organizations in Georgia, an interview with an expert in the field, desk research, and a survey.

During the research excursion to Tbilisi, Georgia, information on the topic was gathered through visits to organizations such as embassies, a think tank, a university, the European Union, and a news organization. This provided multidisciplinary insights from experts with a close view and understanding of the research topic. Not all organizations are named in the paper, in order to avoid exposing their views, which oppose the government.

Qualitative data was also gathered through an expert interview. The aim of the interview was to gather insights on the specific characteristics of disinformation in Georgia, so the interviewee was selected based on their expertise in this field. The team reached out to numerous experts through Google Scholar and LinkedIn, and the final interview featured Dr. Nina Shengelia, who gave her permission to be named in the report. Dr. Shengelia is an expert in, among other fields, social media, legal technology, and digital human rights, and was interviewed about disinformation on Georgian media platforms. The interview was conducted online through Microsoft Teams and was later transcribed using the transcription tool of Teams. The research team expresses its greatest gratitude to her for these insights.

To collect quantitative data, an online survey was conducted to measure the Georgian public’s perception of disinformation. The target group for the survey was Georgians currently residing in Georgia. Because of this specific target group, the number of responses was limited. We recognize that, with so few respondents, our data is not statistically representative, as we did not reach the minimum sample size. However, as most of the respondents were students from the Georgian Institute of Public Affairs, we felt it was still valuable and relevant to include the results to showcase their perception of disinformation in Georgia and its influence on them. The survey consisted of eleven questions in four sections: first, questions on preferred news sources; second, questions on the frequency of encountering disinformation; third, questions on the effectiveness of disinformation; and last, questions on the respondents’ reaction to disinformation. Some of the questions were aimed at filling gaps in the research; others were meant to determine whether the responses would be consistent with the desk research. The survey administration consisted of two phases. The first phase was data collection, in which we advertised the survey through LinkedIn, by reaching out to organizations, and through word of mouth during the excursion in Georgia. The second phase was data analysis. The data was analyzed in Microsoft Excel, and graphs were made for data visualization.
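To illustrate what a minimum sample size can mean in practice, the sketch below applies Cochran's formula for a large population. The 95% confidence level (z = 1.96), the 5% margin of error, and the assumption of maximum variability (p = 0.5) are conventional defaults chosen here for illustration; they are not thresholds stated by the research team, and the sample of 25 in the usage note is hypothetical.

```python
import math


def cochran_min_sample(z: float = 1.96, p: float = 0.5, margin: float = 0.05) -> int:
    """Minimum sample size for a large population (Cochran's formula).

    z, p and margin are illustrative defaults, not values from the report.
    """
    return math.ceil((z ** 2) * p * (1 - p) / margin ** 2)


def margin_of_error(n: int, z: float = 1.96, p: float = 0.5) -> float:
    """Approximate margin of error for a proportion estimated from n responses."""
    return z * math.sqrt(p * (1 - p) / n)


if __name__ == "__main__":
    print("Minimum sample size:", cochran_min_sample())
    # Hypothetical small sample, for comparison only.
    print("Margin of error for n = 25:", round(margin_of_error(25), 3))
```

Under these assumptions, a survey with only a few dozen respondents carries a margin of error several times larger than the conventional 5%, which is consistent with the team's decision to present the results as indicative rather than representative.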

Chapter 1: Historical context

1.1: Russo-Georgian War of 2008

Historical trends have demonstrated how Russia employs hybrid war tactics such as disinformation to undermine governments such as those of Romania and Chechnya. The deployment of unauthorized Russian mercenaries has been reported on various occasions. For example, during the time of the Minsk Accords, Russia illegally occupied Ukrainian territory and attempted to control at least six other regions even after signing the deal (Bugayova, 2025). This tactic showcased Russia’s ability to undermine international law quickly (Moore, 2019). Notably, in the Russo-Georgian war of 2008, Russia deployed an arsenal of disinformation and propaganda tactics. For instance, it shifted the blame for Russian aggression onto Georgia, thereby breaking the two countries’ 2005 peace agreement. This lack of security guarantees allowed the Kremlin to intervene militarily, a situation which is frighteningly comparable to Ukraine (Seskuria, 2024).

Additionally, it is reported that Russia sent pro-Russian reporters to control the narrative in and out of the Georgian region. Human Rights Watch deemed the ‘killing of Russian peacekeepers’ to be extremely unlikely. It is therefore more likely that Russia lied about this in order to manipulate Russians into supporting retaliation. Indeed, disinformation has proven to be an effective foreign policy instrument because it reinforces confirmation bias: it provides individuals with information they already believe, so that newer information is trusted less. Moreover, falsehoods and rumors are easier to spread, as they are more emotional and intrigue mass populations during conflict. The same pattern appeared in Ukraine, where Russia altered its mortality statistics to appear stronger. In addition, Russia employed ‘passportization’, the mass distribution of passports to gain control over a population and homogenize it with Russia (IDFI, 2022a).

Ever since the crisis in 2008 and the Ukraine war, Georgia has faced relentless pressure from foreign actors such as Russia. According to Brender (2024a, p. 5), 20% of Georgia is occupied by Russia, comprising the regions known as Abkhazia and South Ossetia. Additionally, Georgian Dream (GD) representatives have blamed past Georgian leaders, rather than Russia, for the 2008 crisis, which reinforces the view that Russia is the culprit for spreading disinformation in Georgia (Sikharulidze, 2025a).

1.2: The Georgian Dream

1.2.1: Introduction to the Georgian Dream

The Georgian Dream is the ruling party of Georgia, founded by billionaire Bidzina Ivanishvili in 2012. Since then, the Georgian Dream (GD) has ruled Georgia in a tense political climate. Claims from the party’s chair include that ‘a global force’ is responsible for the world’s conflicts with Russia and that NGOs are ‘doing their bidding’ (Gavin, 2024). The party has faced numerous shifts in power, and although it initially followed a pro-Western ideology, it has recently displayed increased signs of authoritarianism (Suny et al., 2025). Notably, through the enactment of its ‘foreign agents’ law’, GD aims to restrict civil society’s role and influence in Georgia. The law was introduced under the pretext that the West and its institutions, such as NATO and the EU, are interfering with Georgian affairs. It is also of Russian origin and poses a grave threat to Georgia’s democracy. Language employed by the party to allude to the West includes the ‘deep state’ and the ‘global war party’ (Courtney, 2024). This chapter covers, first, Georgia’s history of disinformation, especially during the 2008 Russo-Georgian War, and second, the Georgian Dream and its ascension.

1.2.2: The Georgian Dream’s Rise to Power

According to an academic professional, the government is seeking to ‘consolidate its power in the form of a dictatorship’. He elaborated that the Georgian government is displaying anti-EU propaganda and aligning itself with Russia. Similar to Viktor Orbán’s Hungary, the Georgian Dream’s Georgia aims to consolidate and maintain power. For instance, the GD has systematically marginalized opposition parties and established loyal mass media outlets. Consequently, the polarization of Georgian society becomes imminent. Through its foreign agents law, the Georgian Dream has affected roughly 30 thousand NGOs in Georgia that receive 20 percent or more of their funding from outside Georgia. The Asian Development Bank additionally explains that civil society organizations are 90% funded from abroad (Goedemans, 2024). Over 400 organizations in Georgia are refusing to register themselves as foreign agents (Kucera, 2024). Targeting the revenue of opponents such as civil society seems like a viable strategy to secure a win for the Georgian Dream in the electoral process (Dhojnacki, 2024). A Russian article disputes the claim that 1% of NGOs are ready to receive ‘foreign agent’ status and claims instead that 1% or less of organizations do not trust the law. Because of the article’s heavy bias, it is unclear whether it states the truth (Petrova, 2024).

Accusing Europe of starting a front against Russia on its own grounds, the Georgian Dream has been responsible for leading disinformation campaigns against the European Union (IDFI, 2022b). Under the guise of preserving traditional and cultural values, GD holds a firm stance that EU guidelines are ‘unethical’ and ‘inconsistent’ despite receiving economic and democratic contributions from Brussels (Top Chubashov Center, 2023). Russian conspiracy theories predominantly involve anti-Western rhetoric, which the Georgian Dream also engages in (Chanadiri, 2024). The GD calls for sovereign democracy, which is directly linked to Russia’s framework of promoting state-centered control and rejecting Western models of governance (Sikharulidze, 2025b). In order to gain full EU membership, Georgia would need to commit to ‘deoligarchization’ and eliminate the excessive influence of vested interests in Georgia (Portal, 2024).

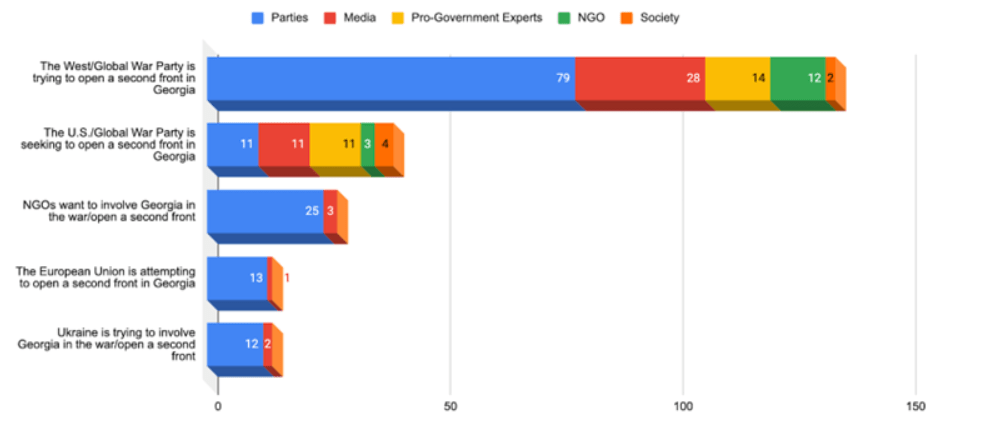

Figure 1. Chart of Messages related to the Global War Party and the second front (July-September 2024) (EDMO, 2024)

Only a small segment of the population believes that the ‘global war party’, otherwise known as the West, is trying to start a war in Georgia.

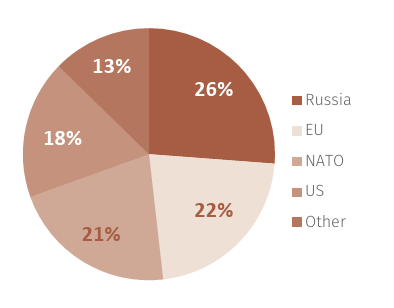

Figure 2. Quantitative distribution chart of Facebook posts from disinformation pages 2017-2018 (Nahzi et al., 2019, p. 11)

Disinformation spread by the Georgian Dream is affecting social media heavily.

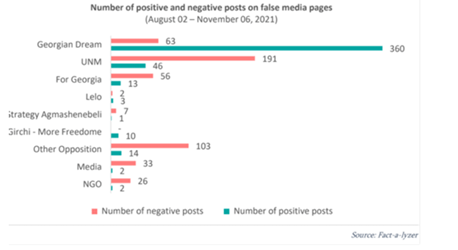

Figure 3. Distribution of positive and negative posts on fake media pages (International Society for Fair Elections and Democracy (ISFED), n.d.)

Between 2021 and 2023, Transparency International Georgia reported a 47% increase in Georgian economic dependency on Russia. Approximately 20 Russian-leaning actors are present in Georgia and around 25 thousand Russian companies have been registered there. This foreign influence poses threats to the nation of Georgia (Brender, 2024b, p. 5).

Georgian President Zourabichvili has expressed concerns about Russia’s increasing presence in Georgia and its waging of a propaganda war (Team, 2024). She describes her country as facing a “Russian special operation, a new form of hybrid warfare” (Congress, 2024). In fact, the European Union Monitoring Mission for Georgia (EUMM) has been a target of disinformation from the Kremlin (The University of Georgia, 2019). Today, a significant part of the Georgian population still believes that the collapse of the Soviet Union was detrimental to Georgia (Anjaparidze, 2024).

A speaker from a think tank organization claimed that the older population of Georgia, who were born and raised in the Soviet Union, are against the protests for EU integration, and they are typically part of a lower income class. On the other hand, the speaker explained that the highly educated and young population are protesting or supporting the protests for becoming part of the EU. An estimated 30 percent of the population listens to one Russian-sponsored television channel per week (Transparency International Georgia, 2023, p. 17).

What do respondents think causes disinformation in Georgia?

The research team asked three Georgians what they believe causes disinformation in Georgia. Within the group, one is a student who lived in Georgia and is currently studying at The Hague University of Applied Sciences, and two are living in Georgia. One respondent claimed that “the media is bought out and will freely lie if they need to”. Another answered that many internet trolls enjoy spreading disinformation, a reference to ‘troll farms’ or coordinated inauthentic behavior of online users (Neal, 2024). The last respondent stated that there are few credible sources that people can use to combat disinformation.

Chapter 2: The influence of disinformation on public perception in Georgia

2.1: Platforms of disinformation

Disinformation has been found on Georgian governmental websites, on social media, and on the streets. Distributing disinformation through different platforms and means ensures that it reaches various groups of the population. Younger generations see disinformation through social media, whereas older generations, some with limited internet access, see it on the street. To reach more remote places in the countryside, disinformation is also broadcast on local radio stations. In an attempt to influence public perception, these disinformation campaigns carry messages against the European Union, Europe, NATO, NGOs, the “West,” liberalism, and the United States. During the campaigns shortly before the elections, videos with two sides were shown in public. One side of the video showed bombing and destruction in Ukraine, and the other side showed peaceful images of Georgia. These videos aimed to make the viewer compare the two perceived “options” of war or peace in order to sway the viewer to vote a certain way, as if to say, “Pick what you want.” The Rondeli organization states that the tactic behind disinformation is not to tell a complete lie but to base the message on truth while manipulating or framing the context. Moreover, the new media law, implemented in May 2024, makes it difficult for media to remain unbiased and still obtain funding (ECNL, n.d.).

2.2: Effects of disinformation

The disinformation campaigns have caused a change in opinion amongst the population of Georgia. According to the Dutch embassy in Tbilisi, 20% of voters in the election were influenced by disinformation. Moreover, 43% of the population perceived the election to have been unfair and influenced. Voters stated they had experienced intimidation and pressure to vote in a certain way during the elections. According to journalists, one piece of evidence of electoral influence is that more votes than voters were counted.

2.3: Factors influencing vulnerability to disinformation

Studies have shown that certain factors influence a person’s vulnerability to disinformation. One of these factors is age. This is also visible in Georgia, where statistics have shown that older generations are more likely to believe disinformation, as they are more prone to blindly trust the government. Moreover, the older generation is more prone to agree with the message of disinformation, as they tend to look back on the “good old days of the Soviet Union”, according to the expert interviewed. The elderly are more prone to be against the European Union because of their experience in the USSR; they also argue that the younger generation does not fully realize what it will mean for Georgia to join and fully integrate into the European Union.

Another determining factor is the geographical location within the country. The Dutch embassy states that compared to Tbilisi, where a large part of the population resides, the people in the countryside are more prone to believing disinformation, as for most of them, all their platforms are from the government and therefore censored. Additionally, the people in the countryside are more financially dependent on the government and therefore less likely to disagree with them, according to the Dutch embassy.

According to the Rondeli organization, statistics have also shown that in Georgia, younger and higher-educated people are more likely to protest on the streets against the government. Maisi News argued that while it is true that more young people are actively against the government, the majority of the protesters are from the younger generation because of the harsh interventions by violent police groups. Other repercussions of protesting include steep fines and being fired from one’s job.

The influence of these factors on the vulnerability to disinformation differs per country (Gozgor, 2021). For example, in some countries, higher education is associated with more criticism of the government, whereas in other countries, it is associated with higher trust levels. In some countries, sex is a determining factor as well; for example, in Japan and the United States, males are more likely to trust the government (Gozgor, 2021).

2.4: Result of the survey

2.4.1: News source

(The percentages do not add up to 100% because multiple answers could be selected in the survey.)

Within the sample, 86.4% of respondents, the vast majority, have news agencies as their primary news source. 40.9% get their news from content creators on social media. Moreover, 36.4% watch the news on television, and 4.5% claim to get their news from civil society groups such as Daitove. No respondents selected the radio or newspaper as their source of news. As to why they use this specific source as their preferred source of news, most answered with arguments such as “convenience” and “reliability”.

2.4.2: Shift in political opinion

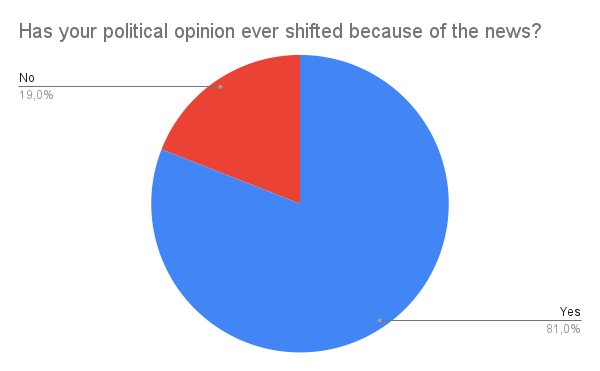

Figure 4 shows the responses to the question of whether the respondents’ political opinion has ever shifted because of the news. The majority, 81%, answered yes: their political opinion has changed because of the news and its contents.

Figure 4

2.4.3: Disinformation on social media

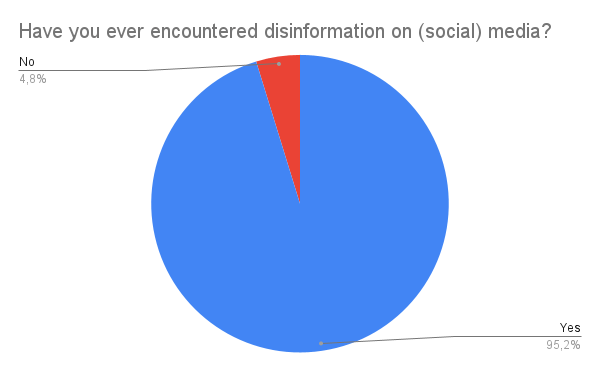

In response to whether they encounter disinformation in the media, 95.2% of the respondents say they have.

Figure 5

When asked what kind of disinformation is encountered in media, the respondents say it mostly consists of incorrect information, misleading content (which is often framed or missing context), clickbait, and AI-generated content such as deepfakes.

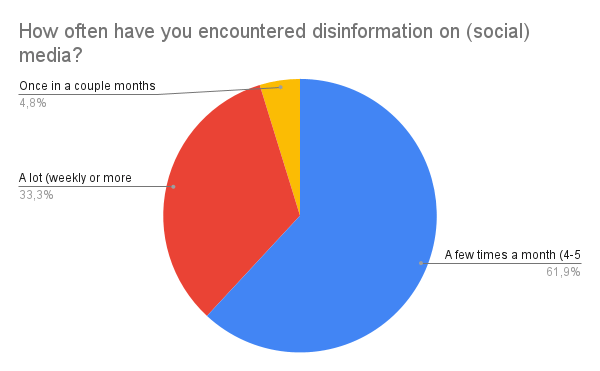

2.4.4: Frequency of disinformation on media

Figure 6 shows how often the respondents encounter disinformation in media. The frequency options were “once in a couple of months”, “a few times a month”, and “a lot (weekly or more)”. From our sample, 61.9% answered that they encounter disinformation in media a few times a month. Moreover, 33.3% encounter disinformation more frequently, weekly or more.

Figure 6

In the survey, the respondents were asked on what platforms they encounter disinformation. The answers consist of media platforms such as TikTok, Instagram, X (formerly Twitter), Facebook, and YouTube.

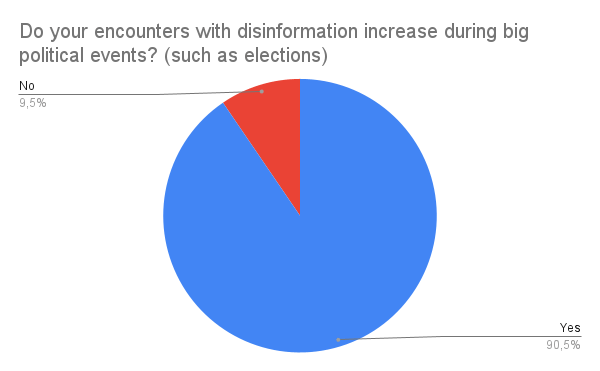

2.4.5: Shifts in disinformation frequency

On the question of whether, in their perception, disinformation increases during big political events, 90.5% answer that it does.

Figure 7

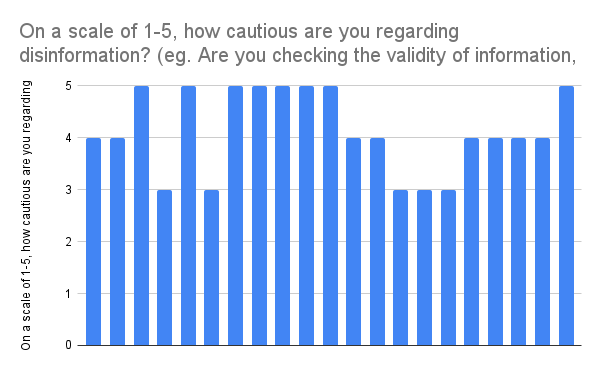

2.4.6: Caution regarding disinformation

Respondents were asked to rate, on a scale of one to five, their caution regarding disinformation. Caution can consist of checking the validity of the source and double-checking by comparing the content with other sources. Figure 8 shows that the lowest rating given was a three out of five. Most respondents rated their caution as either a four or a five, which indicates that the respondents display high levels of caution.

Figure 8

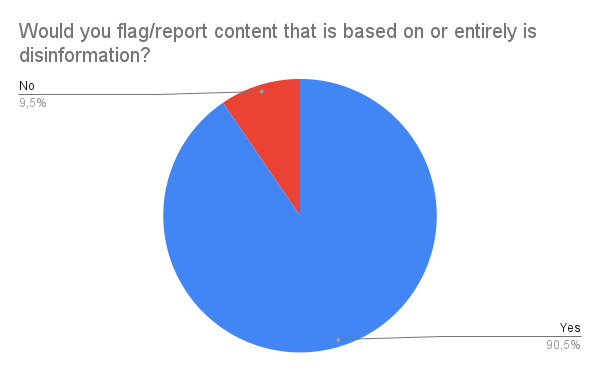

2.4.7: Reporting disinformation

Lastly, the respondents were asked if they would flag or report disinformation content. The majority, 90.5%, claim they would flag or report the content, as seen in Figure 9. When asked whether they would switch to a different platform if they knew the information or content was biased, propaganda, or disinformation, all the respondents answered that they would. This means that while not all respondents would report disinformation, they would all try to counter it by looking for a more reliable and unbiased source.

Figure 9

Chapter 3: The role of artificial intelligence in battling disinformation

This section seeks to first describe the general implication of artificial intelligence in disinformation, together with already existing tactics for countering disinformation. Later, it will highlight the specific constraints in battling government-endorsed disinformation in Georgia. Additionally, it aims to provide an overview on how artificial intelligence can be helpful in bridging these gaps based on its unique applicability.

3.1: The role of artificial intelligence in disinformation

Artificial intelligence (AI) has a significant influence on disinformation campaigns, with both positive and negative implications. Over the past years, AI has changed the production and distribution of disinformation, mainly through the creation of falsified content and by increasing the magnitude and reach of disinformation (Bontridder & Poullet, 2021). However, AI also has several positive implications in battling disinformation, such as verifying the validity of information or providing a systematic approach to handling large volumes of information (Kertysova, 2019).

Falsified content can be created through AI in several ways. Helmus (2022) lists the following technologies: deepfake visuals, voice cloning, and curated texts. A common trait of the first two is that they produce content that has been significantly altered through generative adversarial networks. The third technology utilizes computer language models to artificially create human-like texts (Helmus, 2022).

The increased spread of disinformation is not solely the “merit” of artificial intelligence. Increased global connectivity through social media allows information to travel less filtered and more rapidly (Benzie & Montasari, 2022). Additionally, as shared by a trusted expert on site, the option to share content with one’s own social network makes information appear more personal and trustworthy. However, artificial intelligence successfully leverages these characteristics of social media. Hajli et al. (2022) discovered that automated social bots in social networking services play a prominent role in spreading misleading content.

3.2: Tactics to counter disinformation

Several tactics exist to counter disinformation. Depending on the adversary’s techniques and the state of the disinformation campaign, three approaches are available: reactive, which entails actively countering disinformation; proactive, which focuses on education and the improvement of critical thinking skills; and passive, which advocates not engaging with false content (Dowse & Bachmann, 2022).

Reactive approaches encompass tactics that are aimed at reducing or correcting false information (Dowse & Bachmann, 2022). Zacky and Zacky-Eze (2025) recommended fact-checking and general disinformation detection as the most effective methods to actively counter disinformation. In their recommendations, they highlight the effectiveness of Natural Language Processing (NLP) technologies in detecting characteristics of disinformation. In addition, Bontridder and Poullet (2021) listed techniques such as filtering, blocking, and deprioritizing content as effective methods of regulating disinformation on online platforms.

Proactive approaches promote the role of education in battling disinformation, as a population with critical thinking skills and a higher media literacy is less susceptible to misleading information (Bontridder & Poullet, 2021). Experts in the field of media literacy education highlighted the importance of inter-age education approaches, as most research targets their recommendations at children and young adults (Rasi et al., 2019).

3.3: Constraints of battling disinformation in Georgia

For the following section, experts from the domains of academia, civil society work, and public policy were consulted. They agreed in highlighting the issues of complex disinformation networks, scarcity of resources to fight disinformation, and deliberate hindrance of fact-checkers by the government.

In the remainder of the report, the term “disinformation networks” describes the trail the information follows and the stakeholders who are involved in its distribution. One issue highlighted by multiple interview partners was the long and inconsistent trail the information follows before reaching its intended audience. As expressed by Dr. Shengelia, it is difficult to verify who sponsors the information and what steps it went through before reaching users. Fact-checking organizations that seek to verify the root source of information online need to trace back an unusually long trail of steps, which is further complicated by the fact that a fraction of the information is based on subjective opinions.

Additionally, while structural measures exist to counter disinformation on social media platforms, these mostly concern online media outlets, not individual users. Disinformation posted by individual users might be harder to detect because of the number of accounts that would require monitoring. It might also appear more trustworthy to other users, as the information seems to come from regular people such as “housewives, students, or school pupils”.

As discussed by interview partners at a research organization, anti-messaging against propaganda was gradually taken over by members of the authority, who began to spread propaganda and disinformation themselves. By this, they not only hindered fact-checking missions but also forced civil society actors and watchdog organizations to find new platforms and resources for their activities. The government is also limiting these efforts by imposing restrictions on collaboration with foreign expert organizations and by subjecting them to political impediments. These involve investigations, coercion, and fines.

The structural means to battle disinformation are also shrinking. Recently, Mark Zuckerberg announced that Meta will suspend the use of fact-checkers (Kaplan, 2025). This measure will start in the US, but the company aims to extend it worldwide. As was highlighted during the expert interview, this would remove the platform Georgian civil society actors use to report disinformative content. On the national level, the Georgian government is drafting legislation for social media regulation; however, multiple voices expressed concerns over the potential censorship capabilities this would entail.

Apart from these circumstances, the magnitude and nature of the information also pose a challenge. Parties who are interested in spreading disinformation utilize bots to ensure a fast and non-transparent spread of information. As their messages combine false, partially valid, and valid information, selecting and correcting false statements is overly time-consuming. Visual content is mostly falsified through the use of artificial intelligence, and as these programmes become more sophisticated, it becomes difficult to determine whether visuals were falsified in the first place. The distortion of content might not even be visible to many internet users, as usually only a couple of minor details are changed, which average consumers overlook.

3.4: Potential countermeasures

It has been emphasized during multiple conversations that effective battling of disinformation requires a holistic and sustainable approach of education, social resilience and awareness. However, due to the sensitivity of the situation, it is also desirable to consider immediate measures.

As discussed in the previous segments, artificial intelligence has proven useful in battling disinformation, as it is able to handle large volumes of data and analyze content in a systematic manner that might exceed the capabilities of a human fact-checker. Given the special characteristics of disinformation in Georgia, artificial intelligence can be considered a potential countermeasure.

Based on the above-described characteristics, a potential AI tool needs to fulfill the following duties: analyzing information trails, platform-based disinformation detection, and information correction. Natural language processing (NLP) tools are currently being tested for disinformation detection, as they are able to recognize false narratives based on their programmed perception of “fake” and “real”. Such NLP models operate on a mathematical function that assigns a value to the piece of information being analyzed, based on instructions received from the model’s feature space. In this context, features are the criteria against which a piece of information is evaluated. In addition to this, machine learning algorithms might be complementary in correcting the detected disinformation (Skolkay & Filin, 2019).

To develop an NLP model, clear determinants of false narratives need to be defined. Independent actors of Georgian civil society need to determine what criteria need to be fulfilled for information to be classified as fake, and design the mathematical model based on those characteristics. A guideline on such criteria was proposed by Skolkay and Filin (2019), who listed text analysis, speech tagging, and grammar parsing as pointers.
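The idea of evaluating a text against a feature space and assigning it a value can be sketched in a few lines of Python. This is a toy illustration only, not the model proposed by Skolkay and Filin (2019): the three features, the word list, the weights, and the bias are all hypothetical placeholders that independent experts would need to define and validate, and the tokenization assumes English rather than Georgian text.

```python
import math
import re
from typing import Callable, Dict, List

# Hypothetical lexicon of emotionally loaded terms (assumption, for illustration).
LOADED_TERMS = {"traitor", "puppet", "conspiracy", "secret", "shocking"}


def tokens(text: str) -> List[str]:
    """Lowercase word tokens (very rough text analysis)."""
    return re.findall(r"[a-z']+", text.lower())


def loaded_term_ratio(text: str) -> float:
    """Share of words that appear in the loaded-terms lexicon."""
    words = tokens(text)
    return sum(w in LOADED_TERMS for w in words) / len(words) if words else 0.0


def exclamation_density(text: str) -> float:
    """Exclamation marks per sentence, capped at 1."""
    sentences = max(1, len(re.findall(r"[.!?]+", text)))
    return min(1.0, text.count("!") / sentences)


def shouting_ratio(text: str) -> float:
    """Share of words (3+ letters) written entirely in capitals."""
    words = re.findall(r"[A-Za-z]{3,}", text)
    return sum(w.isupper() for w in words) / len(words) if words else 0.0


# The feature space: each criterion maps a text to a number between 0 and 1.
FEATURES: Dict[str, Callable[[str], float]] = {
    "loaded_term_ratio": loaded_term_ratio,
    "exclamation_density": exclamation_density,
    "shouting_ratio": shouting_ratio,
}

# Hand-set weights and bias (assumptions); a real model would learn them from labeled data.
WEIGHTS = {"loaded_term_ratio": 6.0, "exclamation_density": 2.0, "shouting_ratio": 3.0}
BIAS = -2.0


def suspicion_score(text: str) -> float:
    """Combine the weighted features into a single value between 0 and 1."""
    z = BIAS + sum(WEIGHTS[name] * feature(text) for name, feature in FEATURES.items())
    return 1.0 / (1.0 + math.exp(-z))


if __name__ == "__main__":
    for sample in ("The parliament adopted the budget on Tuesday.",
                   "SHOCKING! Secret conspiracy exposed!"):
        print(round(suspicion_score(sample), 3), sample)
```

A score of this kind would only flag content for review by a human fact-checker. Surface features like these cannot establish whether a claim is true, which is why the choice of criteria matters so much.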

Such a tool would be applicable to the work of fact-checkers and watchdog organizations in Georgia, who are currently hindered by political and economic constraints. This solution would be able to scan the online sphere with greater speed and accuracy, while simultaneously preserving the anonymity of individual fact-checkers, thus enhancing their safety online.

Reference List

Anjaparidze, Z. (2024). Russian Influence in Georgia Ahead of Critical Elections – Jamestown. Jamestown. https://jamestown.org/program/russian-influence-in-georgia-ahead-of-critical-elections/

Anti-Western Propaganda and Disinformation Amid the 2024 Georgian Parliamentary Elections – EDMO. (2024). https://edmo.eu/publications/anti-western-propaganda-and-disinformation-amid-the-2024-georgian-parliamentary-elections/

Bontridder, N., & Poullet, Y. (2021). The role of artificial intelligence in disinformation. Data & Policy, 3, e32. Cambridge University Press. https://www.researchgate.net/publication/356549065_The_role_of_artificial_intelligence_in_disinformation

Brender, R. (2024a). Georgia at a Crossroads: An Increasingly Illiberal Domestic Policy is Becoming an Obstacle to EU Accession. In EGMONT POLICY BRIEF (No. 350). https://www.egmontinstitute.be/app/uploads/2024/07/Reinhold-Brender_Policy_Brief_350_vFinal.pdf?type=pdf

Brender, R. (2024b). Georgia at a Crossroads: An Increasingly Illiberal Domestic Policy is Becoming an Obstacle to EU Accession. In Egmont Policy Brief (No. 350). https://www.egmontinstitute.be/app/uploads/2024/07/Reinhold-Brender_Policy_Brief_350_vFinal.pdf?type=pdf

Bugayova, N. (2025). Institute For The Study Of War. https://www.understandingwar.org/backgrounder/lessons-minsk-deal-breaking-cycle-russias-war-against-ukraine

Chanadiri, N. (2024). Awakening totalitarian traditions: Russian disinformation in the lead-up to the Georgian elections. Rondelli Foundation. https://gfsis.org/en/awakening-totalitarian-traditions-russian-disinformation-in-the-lead-up-to-the-georgian-elections/

Congress. (2024). Georgia: Incumbent Georgian Dream Claims Victory in Disputed Parliamentary Elections. In Congress.gov. https://www.congress.gov/crs-product/IN12456

Courtney, W. (2024, 24th of June). Georgian Epiphany. RAND. https://www.rand.org/pubs/commentary/2024/06/georgian-epiphany.html

Chadwick, A., & Stanyer, J. (2021). Deception as a Bridging Concept in the Study of Disinformation, Misinformation, and Misperceptions: Toward a Holistic Framework. https://academic.oup.com/ct/article/32/1/1/6406430

Dhojnacki. (2024, 13th of December). Why Georgia’s ruling party is pushing for the foreign agent law—and how the West should respond – Atlantic Council. Atlantic Council. https://www.atlanticcouncil.org/blogs/new-atlanticist/why-georgias-ruling-party-is-pushing-for-the-foreign-agent-law-and-how-the-west-should-respond/

Dowse, A., & Bachmann, S. D. (2022). Information warfare: methods to counter disinformation. Defense & Security Analysis, 38(4), 453-469. https://www.tandfonline.com/doi/full/10.1080/14751798.2022.2117285

Gavin, G. (2024, 19th of May). Freemasons and ‘global war party’ conspiring against Georgia, ruling party claims. POLITICO. https://www.politico.eu/article/freemasons-global-war-party-conspiring-georgian-dream-party-claims-russia-ivanishvili/

Georgia: What does the Implementing Regulation of the Law on Transparency of Foreign Influence bring? (n.d.). ECNL. https://ecnl.org/news/georgia-what-does-implementing-regulation-law-transparency-foreign-influence-bring

Goedemans, M. (2024, 21st of August). What Georgia’s Foreign Agent Law Means for Its Democracy. Council On Foreign Relations. https://www.cfr.org/in-brief/what-georgias-foreign-agent-law-means-its-democracy

Gozgor, G. (2021). Global Evidence on the Determinants of Public Trust in Governments during the COVID-19. Applied Research in Quality Of Life, 17(2), 559–578. https://doi.org/10.1007/s11482-020-09902-6

Hajli, N., Saeed, U., Tajvidi, M., & Shirazi, F. (2022). Social Bots and the Spread of Disinformation in Social Media: The Challenges of Artificial Intelligence. British Journal of Management, 33, 1238-1253. https://onlinelibrary.wiley.com/doi/abs/10.1111/1467-8551.12554

Hameleers, M. (2022). Disinformation as a context-bound phenomenon: toward a conceptual clarification integrating actors, intentions and techniques of creation and dissemination. https://academic.oup.com/ct/article/33/1/1/6759692?login=false

Helmus, T. C. (2022). Artificial Intelligence, Deepfakes, and Disinformation. RAND Corporation. https://www.rand.org/pubs/perspectives/PEA1043-1.html

Institute for Development of Freedom of Information (IDFI). (2022a). How Russian disinformation tactics were utilised in the context of the 2008 5-day war. https://idfi.ge/en/how_russian_disinformation_tactics_were_utilised_in_the_context_of_the_2008_5_day_war?u

Institute for Development of Freedom of Information (IDFI). (2022b). How Russian disinformation tactics were utilised in the context of the 2008 5-day war. https://idfi.ge/en/how_russian_disinformation_tactics_were_utilised_in_the_context_of_the_2008_5_day_war?u

International Society for Fair Elections and Democracy (ISFED). (n.d.). Elections & Disinformation: Monitoring online democratic discourse in Georgia. https://www.ndi.org/sites/default/files/Updated%20format%20-%20Monitoring%20Online%20Democratic%20Discourse%20in%20Georgia%20Case%20Study_%20International%20Society%20for%20Fair%20Elections%20and%20Democracy%20%28ISFED%29__Final.pdf

Kaplan, J. (2025). More Speech and Fewer Mistakes. Meta. https://about.fb.com/news/2025/01/meta-more-speech-fewer-mistakes/

Kertysova, K. (2019). Artificial Intelligence and Disinformation. How AI Changes the Way Disinformation is Produced, Disseminated and can be Countered. Security and Human Rights, 29, 55-81. https://www.researchgate.net/publication/338042476_Artificial_Intelligence_and_Disinformation

Kucera, J. (2024, 24th of August). Defying controversial “Foreign-Agent” law, Georgian NGOs are ready to fight. RadioFreeEurope/RadioLiberty. https://www.rferl.org/a/georgia-ngos-foreign-agent-law/33090157.html

Moore, C. (2019). Russia and Disinformation: The Case of the Caucasus. https://www.crestresearch.ac.uk/resources/russia-and-disinformation-the-case-of-the-caucasus-full-report/

Nahzi, F., Shota Gvineria, Hasan Davulcu, & Kaleigh Schwalbe. (2019). Social Media Monitoring and Analysis Final Report. In Arizona State University’s (ASU) McCain Institute For International Leadership. https://eprc.ge/wp-content/uploads/2022/02/disinfo_a5_eng_final_.pdf

Neal, W. (2024, 24th of May). NATO Helped Georgia Counter Russian Trolls. Then the Strategy Backfired. New Lines Magazine. https://newlinesmag.com/reportage/nato-helped-georgia-counter-russian-trolls-then-the-strategy-backfired/

Petrova, M. (2024). How many NGOs registered as foreign agents in Georgia? Vestnik Kavkaza. https://en.vestikavkaza.ru/news/How-many-NGOs-registered-as-foreign-agents-in-Georgia.html

Portal, L. (2024). Georgia’s path towards Europe and the West. https://www.blue-europe.eu/analysis-en/short-analysis/georgias-path-towards-europe-and-the-west/

Rasi, P., Vuojärvi, H., & Ruokamo, H. (2019). Media Literacy Education for All Ages. Journal of Media Literacy Education, 11(2), 1-19. https://digitalcommons.uri.edu/jmle/vol11/iss2/1/

Sikharulidze, V. (2025a). Russian Influence Operations in Georgia: A Threat to Democracy and Regional Stability. Foreign Policy Research Institute (FPRI). https://www.fpri.org/article/2025/03/russian-influence-operations-in-georgia-a-threat-to-democracy-and-regional-stability/

Sikharulidze, V. (2025b). Russian Influence Operations in Georgia: A Threat to Democracy and Regional Stability. Foreign Policy Research Institute (FPRI). https://www.fpri.org/article/2025/03/russian-influence-operations-in-georgia-a-threat-to-democracy-and-regional-stability/

Skolkay, A., & Filin, J. (2019). A Comparison of Fake News Detecting and Fact-Checking AI Based Solutions. Studia Medioznawcze, 20(4), 365-383. https://www.academia.edu/41164495/A_Comparison_of_Fake_News_Detecting_and_Fact_Checking_AI_Based_Solutions

Suny, R. G., Djibladze, M. L., Lang, D. M., & Howe, G. M. (2025, 17th of March). Georgia (country) | Map, People, Language, Religion, Culture, & History. Encyclopedia Britannica. https://www.britannica.com/place/Georgia/Independent-Georgia

Team, E. (2024, 30th of May). Georgia’s ‘Foreign Agents’ Law: Explained — Eurasia Strategy Insights. Eurasia Strategy Insights. https://sealion-robin-8wal.squarespace.com/snapshots/georgia-foreign-agents-law-explained

The University of Georgia. (2019, 24th of December). Discrediting EU and EU Monitoring Mission in Georgia. eufactcheck.eu. https://eufactcheck.eu/blogpost/discrediting-eu-and-eu-monitoring-mission-in-georgia/

Top Chubashov Center. (2023). The Cold War turned hot between Georgian Dream and Georgian President. https://top-center.org/en/analytics/3559/the-cold-war-turned-hot-between-georgian-dream-and-georgian-president

Transparency International Georgia. (2023). Spreading Disinformation in Georgia, State Approach and Countermeasures. Transparency International Georgia. https://www.transparency.ge/sites/default/files/disinfo-en_0.pdf

Urushadze, M., & Kiknadze, T. (2021). The Relevance of the Actual Values of the Political Actors of Georgia with the Ideologies Declared by Them. https://www.dpublication.com/wp-content/uploads/2021/06/331-350.pdf

Zacky, E. U., & Zacky-Eze, C. J. (2025). Combatting Disinformation: Analysing Effective Communication Strategies and Tactics for Organisational Resilience. Tripodos, 57.
